What are therapy apps doing with your data?

Talkspace, Crisis Text Line, and BetterHelp don’t keep things quite as private as you might think.


Related Articles

-

-

Music you can really feel — no, really

-

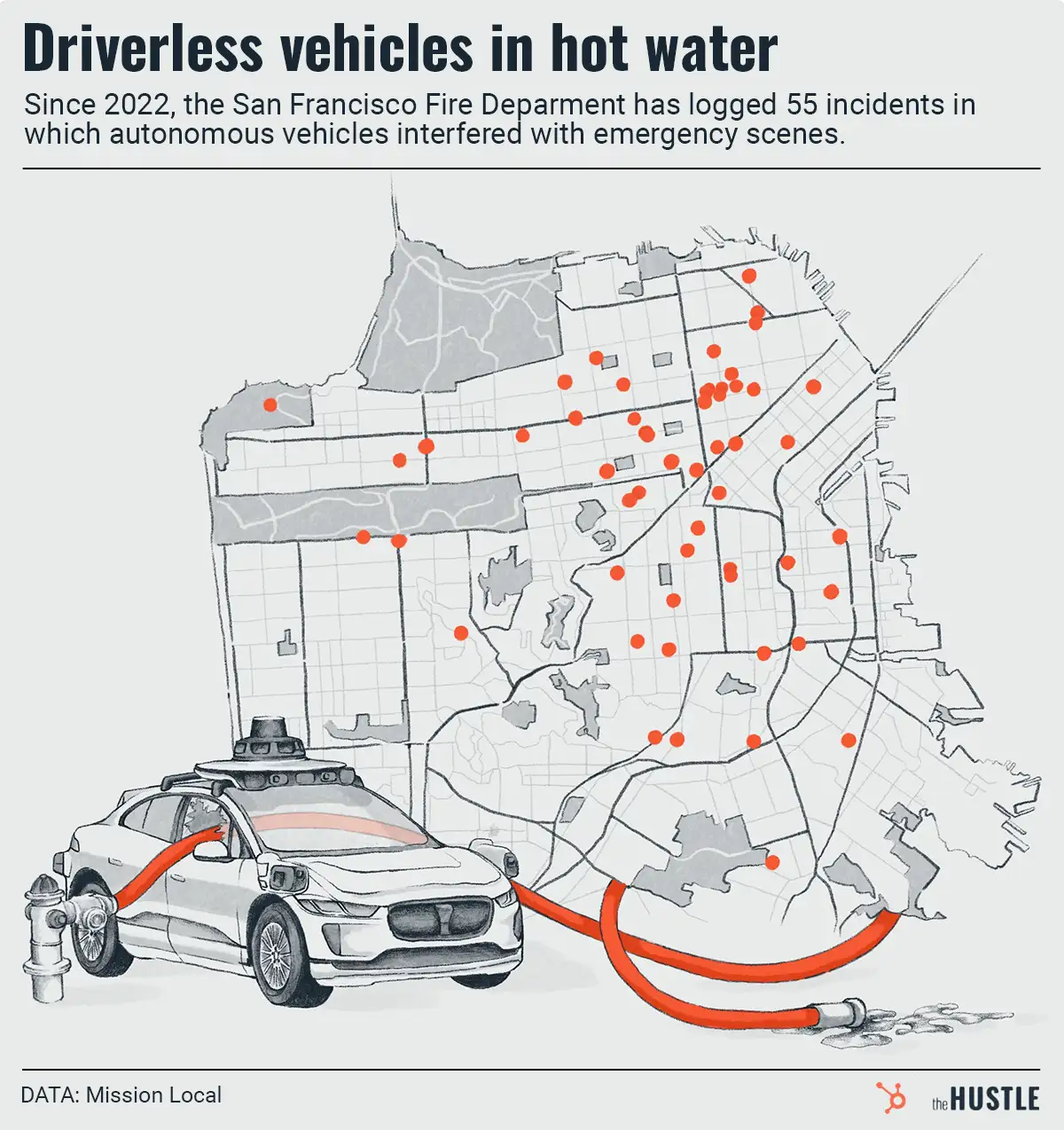

Where there’s smoke, there’s an autonomous vehicle blocking a fire

-

Meta vs. Canada is a long pattern of dismantling news

-

Is the ‘Big One’ about to drop on Amazon?

-

Don’t pretend you aren’t interested in the world’s largest passenger elevator

-

TikTok’s new plan to avoid getting banned in the US

-

France vs. social media dishonesty

-

The next frontier in facial-recognition tech: Not stealing dead people’s pics, ideally

-

Canada’s drug experiment