Facebook’s plan to crack down on porn: Call in the Red Team

Dick pics might help the tech giant build better algorithms.

Related Articles

-

-

The government vs. AI deepfakes

-

Big Tech power rankings: Where the 5 giants stand to start 2024

-

The government can read your push notifications

-

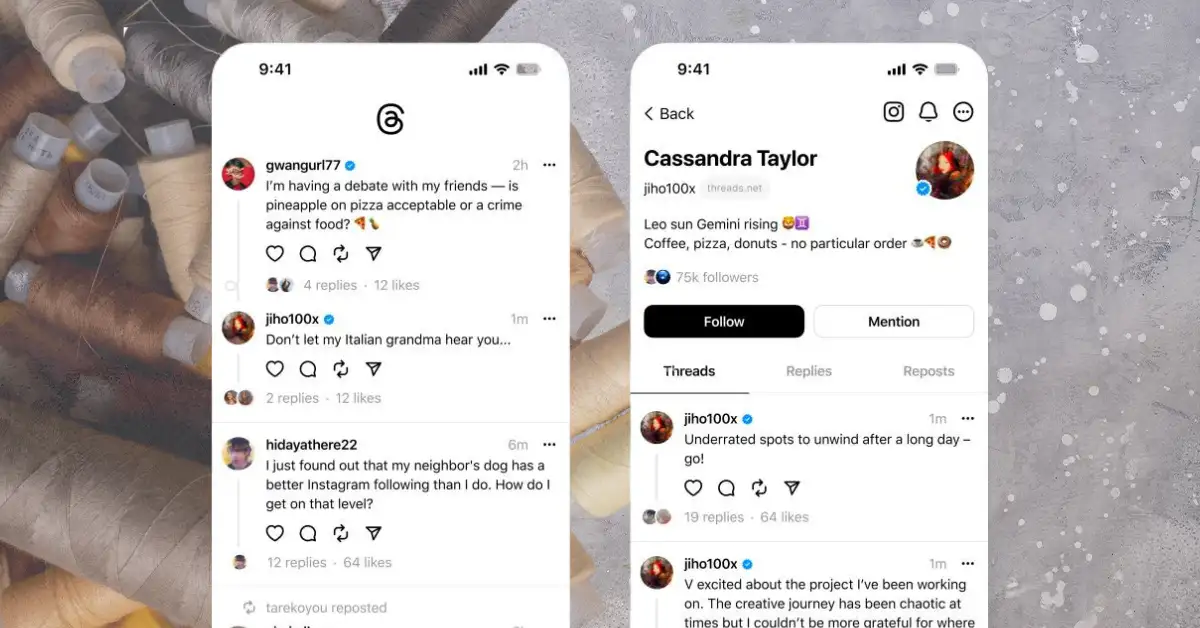

Threads’ golden opportunity is here — Meta doesn’t seem too interested in taking it

-

Music you can really feel — no, really

-

Meta vs. Canada is a long pattern of dismantling news

-

Meta’s latest metamorphosis is a mixed bag

-

Enshittification just keeps happening

-

We tried Threads, Meta’s Twitter competitor